引言

在数据为王的时代,技术与金融的深度融合正在重新定义着投资的边界。还记得十年前,我们在电脑前盯着股票K线图,手动计算各种技术指标,凭借经验和直觉做出投资决策的日子吗?如今,人工智能的浪潮正在彻底改变这一切。

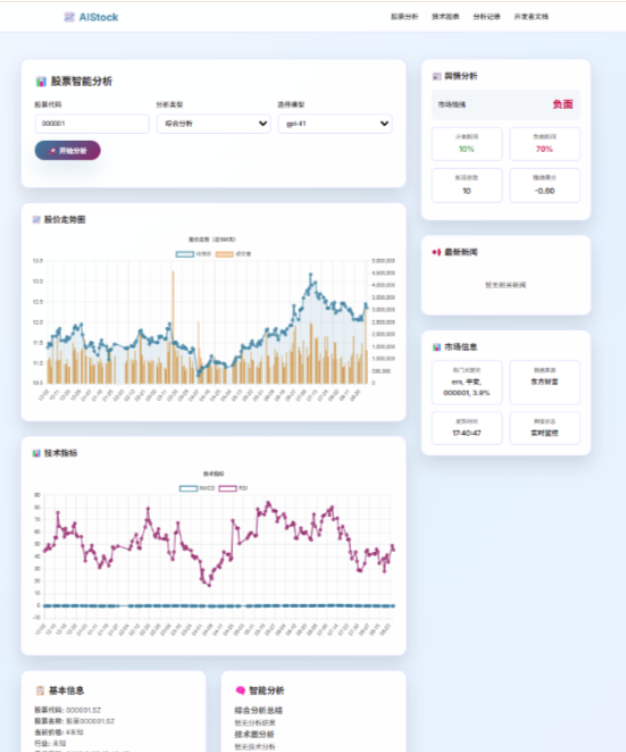

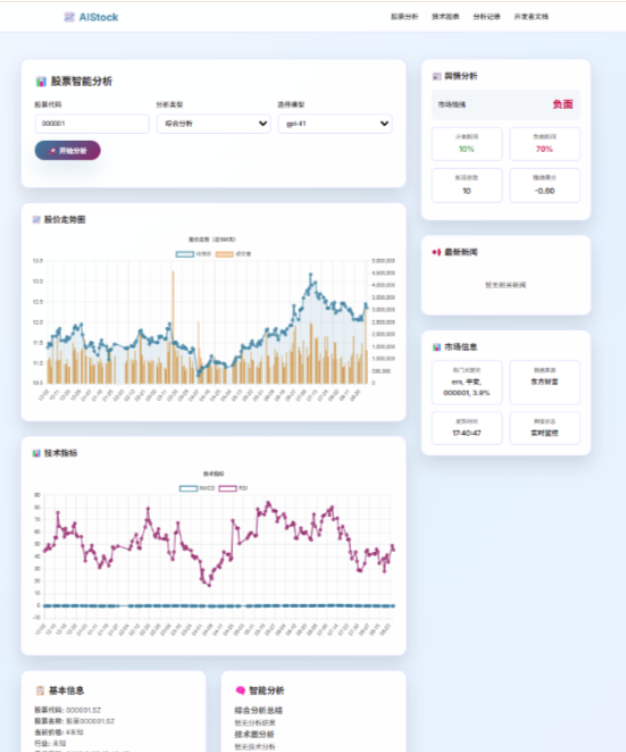

想象一下,有这样一个系统:它能够自动收集A股市场的实时数据,运用复杂的技术指标进行分析,利用大语言模型提供智能预测,并通过直观的Web界面展示所有分析结果。更重要的是,这个系统是完全开源的,任何人都可以获取、学习和改进。

这就是我们今天要深度解析的AIStock系统。作为一名在金融科技领域摸爬滚打多年的技术人,我被这个项目的设计理念和技术实现深深吸引。它不仅展示了现代Python生态在金融数据分析领域的强大能力,更重要的是,它为我们展示了AI如何真正赋能传统金融分析。

本文将从系统架构、关键技术实现、部署运维、应用案例、开发指南等多个维度,深入剖析AIStock系统的技术内核,为读者呈现一个完整的AI驱动金融分析系统的技术全貌。

第一章:系统架构设计

1.1 整体设计原理

AIStock系统采用了经典的分层架构设计,从底层的数据采集到上层的AI分析,每一层都有其明确的职责和边界。这种设计不仅保证了系统的可维护性,更为后续的功能扩展奠定了坚实的基础。

┌─────────────────────────────────────────────────────────────┐

│ Web展示层 (Flask/Vue.js) │

├─────────────────────────────────────────────────────────────┤

│ AI分析层 (LLM Integration) │

├─────────────────────────────────────────────────────────────┤

│ 计算层 (Technical Indicators) │

├─────────────────────────────────────────────────────────────┤

│ 数据层 (SQLite + Cache) │

├─────────────────────────────────────────────────────────────┤

│ 数据源层 (AKShare + Tushare) │

└─────────────────────────────────────────────────────────────┘

技术选型依据分析:

- 数据源层:选择AKShare和Tushare的双重保障策略

- AKShare:免费开源,数据覆盖全面

- Tushare:专业金融数据接口,数据质量高

- 双源策略确保数据获取的稳定性和可靠性

- 数据存储层:SQLite的智慧选择

- 零配置部署,降低系统复杂度

- 文件化存储,便于数据备份和迁移

- ACID事务特性,保证数据一致性

- 对于个人投资者场景,性能完全满足需求

- 计算层:基于Pandas的高效数据处理

- 向量化计算,处理大规模时间序列数据

- 丰富的金融指标计算库支持

- 内存优化的数据结构设计

- AI分析层:多模型支持的灵活架构

- 支持OpenAI、DeepSeek等多种大语言模型

- 本地化分析能力,降低API调用成本

- 可插拔的模型接口设计

1.2 核心组件解析

数据处理引擎

数据处理引擎是整个系统的基石,负责从多个数据源获取、清洗、存储股票数据。其核心设计理念是"容错优先,性能兼顾"。

class DataEngine:

"""数据处理引擎核心类"""

def __init__(self, db_path="./data/stock_data.db"):

self.db_path = db_path

self.conn = sqlite3.connect(db_path, check_same_thread=False)

self.lock = threading.Lock()

def fetch_stock_data(self, symbol, start_date, end_date):

"""

多数据源股票数据获取策略

优先使用Tushare,失败时自动降级到AKShare

"""

try:

if self.tushare_available:

df = self._fetch_from_tushare(symbol, start_date, end_date)

if not df.empty:

return self._standardize_data(df, 'tushare')

except Exception as e:

logger.warning(f"Tushare数据获取失败: {e}")

try:

df = self._fetch_from_akshare(symbol, start_date, end_date)

return self._standardize_data(df, 'akshare')

except Exception as e:

logger.error(f"AKShare数据获取失败: {e}")

raise DataFetchError(f"所有数据源均不可用: {symbol}")

def _standardize_data(self, df, source):

"""

数据标准化处理

统一不同数据源的字段格式和数据类型

"""

if source == 'tushare':

df = df.rename(columns={

'ts_code': 'symbol',

'trade_date': 'date',

'vol': 'volume'

})

elif source == 'akshare':

df = df.rename(columns={

'日期': 'date',

'开盘': 'open',

'收盘': 'close',

'最高': 'high',

'最低': 'low',

'成交量': 'volume'

})

df['date'] = pd.to_datetime(df['date'])

numeric_columns = ['open', 'close', 'high', 'low', 'volume']

df[numeric_columns] = df[numeric_columns].astype(float)

return df.sort_values('date').reset_index(drop=True)

预测模型核心算法

预测模型是系统的智能核心,结合传统技术分析和现代AI能力,为投资决策提供多维度的分析视角。

class PredictionEngine:

"""预测引擎核心类"""

def __init__(self, model_config=None):

self.technical_analyzer = TechnicalAnalyzer()

self.llm_analyzer = LLMAnalyzer()

self.ensemble_weights = model_config.get('weights', {

'technical': 0.4,

'sentiment': 0.3,

'llm': 0.3

})

def comprehensive_analysis(self, symbol, days=30):

"""

综合分析方法

整合技术分析、情感分析和AI分析的结果

"""

stock_data = self.data_engine.get_stock_data(symbol, days)

technical_signals = self.technical_analyzer.analyze(stock_data)

ai_analysis = self.llm_analyzer.analyze_stock(

stock_data,

analysis_type='comprehensive'

)

prediction = self._ensemble_prediction(

technical_signals,

ai_analysis

)

return {

'symbol': symbol,

'prediction': prediction,

'confidence': self._calculate_confidence(technical_signals, ai_analysis),

'technical_analysis': technical_signals,

'ai_analysis': ai_analysis,

'timestamp': datetime.now().isoformat()

}

def _ensemble_prediction(self, technical_signals, ai_analysis):

"""

集成学习预测方法

基于加权平均的多模型融合策略

"""

tech_score = self._normalize_technical_score(technical_signals)

ai_score = self._extract_ai_score(ai_analysis)

final_score = (

tech_score * self.ensemble_weights['technical'] +

ai_score * self.ensemble_weights['llm']

)

if final_score > 0.6:

return 'STRONG_BUY'

elif final_score > 0.3:

return 'BUY'

elif final_score > -0.3:

return 'HOLD'

elif final_score > -0.6:

return 'SELL'

else:

return 'STRONG_SELL'

第二章:关键技术实现

2.1 数据处理流程

数据处理是整个系统的生命线,其质量直接影响后续分析的准确性。AIStock系统在数据处理方面展现了工程化的严谨性和技术的先进性。

数据清洗与特征工程

class DataProcessor:

"""数据处理器 - 负责数据清洗和特征工程"""

def __init__(self):

self.outlier_detector = IsolationForest(contamination=0.1)

self.scaler = StandardScaler()

def clean_and_engineer_features(self, df):

"""

数据清洗和特征工程主流程

"""

df = self._quality_check(df)

df = self._handle_outliers(df)

df = self._handle_missing_values(df)

df = self._feature_engineering(df)

return df

def _quality_check(self, df):

"""数据质量检查"""

required_columns = ['open', 'close', 'high', 'low', 'volume']

missing_columns = [col for col in required_columns if col not in df.columns]

if missing_columns:

raise ValueError(f"缺少必要字段: {missing_columns}")

invalid_rows = df[

(df['high'] < df['low']) |

(df['high'] < df['close']) |

(df['low'] > df['close']) |

(df['volume'] < 0)

]

if not invalid_rows.empty:

logger.warning(f"发现{len(invalid_rows)}行逻辑异常数据,已自动修复")

df = df.drop(invalid_rows.index)

return df

def _handle_outliers(self, df):

"""异常值检测和处理"""

price_features = ['open', 'close', 'high', 'low']

outliers = self.outlier_detector.fit_predict(df[price_features])

df['is_outlier'] = outliers == -1

for col in price_features:

outlier_mask = df['is_outlier']

if outlier_mask.any():

df.loc[outlier_mask, col] = df[col].rolling(

window=5, center=True, min_periods=1

).mean()[outlier_mask]

return df

def _feature_engineering(self, df):

"""特征工程 - 构建技术指标和衍生特征"""

df['price_change'] = df['close'].pct_change()

df['price_range'] = (df['high'] - df['low']) / df['close']

df['upper_shadow'] = (df['high'] - df[['open', 'close']].max(axis=1)) / df['close']

df['lower_shadow'] = (df[['open', 'close']].min(axis=1) - df['low']) / df['close']

for window in [5, 10, 20, 30, 60]:

df[f'MA{window}'] = df['close'].rolling(window=window).mean()

df[f'MA{window}_ratio'] = df['close'] / df[f'MA{window}'] - 1

df = self._calculate_macd(df)

df = self._calculate_rsi(df)

df = self._calculate_bollinger_bands(df)

df = self._calculate_kdj(df)

df = self._calculate_volume_indicators(df)

return df

def _calculate_macd(self, df, fast=12, slow=26, signal=9):

"""MACD指标计算"""

ema_fast = df['close'].ewm(span=fast, adjust=False).mean()

ema_slow = df['close'].ewm(span=slow, adjust=False).mean()

df['MACD'] = ema_fast - ema_slow

df['MACD_signal'] = df['MACD'].ewm(span=signal, adjust=False).mean()

df['MACD_hist'] = df['MACD'] - df['MACD_signal']

df['MACD_bullish'] = (

(df['MACD'] > df['MACD_signal']) &

(df['MACD'].shift(1) <= df['MACD_signal'].shift(1))

)

df['MACD_bearish'] = (

(df['MACD'] < df['MACD_signal']) &

(df['MACD'].shift(1) >= df['MACD_signal'].shift(1))

)

return df

def _calculate_rsi(self, df, window=14):

"""RSI指标计算"""

delta = df['close'].diff()

gain = (delta.where(delta > 0, 0)).rolling(window=window).mean()

loss = (-delta.where(delta < 0, 0)).rolling(window=window).mean()

rs = gain / loss

df['RSI'] = 100 - (100 / (1 + rs))

df['RSI_overbought'] = df['RSI'] > 70

df['RSI_oversold'] = df['RSI'] < 30

return df

关键算法实现逻辑

技术指标的计算是金融分析的核心,每个指标都蕴含着深刻的市场理论基础。

def _calculate_kdj(self, df, k_period=9, d_period=3, j_period=3):

"""

KDJ指标计算 - 随机指标的精确实现

KDJ是技术分析中最重要的摆动指标之一

"""

low_min = df['low'].rolling(window=k_period).min()

high_max = df['high'].rolling(window=k_period).max()

rsv = 100 * ((df['close'] - low_min) / (high_max - low_min))

k_values = [50]

d_values = [50]

for i in range(1, len(df)):

if pd.isna(rsv.iloc[i]):

k_val = k_values[-1]

else:

k_val = (2/3) * k_values[-1] + (1/3) * rsv.iloc[i]

d_val = (2/3) * d_values[-1] + (1/3) * k_val

k_values.append(k_val)

d_values.append(d_val)

j_values = [3 * k - 2 * d for k, d in zip(k_values, d_values)]

df['KDJ_K'] = k_values

df['KDJ_D'] = d_values

df['KDJ_J'] = j_values

df['KDJ_golden_cross'] = (

(df['KDJ_K'] > df['KDJ_D']) &

(df['KDJ_K'].shift(1) <= df['KDJ_D'].shift(1)) &

(df['KDJ_K'] < 80)

)

df['KDJ_death_cross'] = (

(df['KDJ_K'] < df['KDJ_D']) &

(df['KDJ_K'].shift(1) >= df['KDJ_D'].shift(1)) &

(df['KDJ_K'] > 20)

)

return df

def _calculate_volume_indicators(self, df):

"""成交量指标计算"""

df['volume_ma5'] = df['volume'].rolling(window=5).mean()

df['volume_ma10'] = df['volume'].rolling(window=10).mean()

df['volume_ratio'] = df['volume'] / df['volume_ma5']

obv = [0]

for i in range(1, len(df)):

if df['close'].iloc[i] > df['close'].iloc[i-1]:

obv.append(obv[-1] + df['volume'].iloc[i])

elif df['close'].iloc[i] < df['close'].iloc[i-1]:

obv.append(obv[-1] - df['volume'].iloc[i])

else:

obv.append(obv[-1])

df['OBV'] = obv

df['price_volume_divergence'] = self._detect_price_volume_divergence(df)

return df

def _detect_price_volume_divergence(self, df, window=20):

"""价量背离检测算法"""

price_trend = df['close'].rolling(window=window).apply(

lambda x: np.polyfit(range(len(x)), x, 1)[0]

)

volume_trend = df['volume'].rolling(window=window).apply(

lambda x: np.polyfit(range(len(x)), x, 1)[0]

)

bullish_divergence = (price_trend < 0) & (volume_trend > 0)

bearish_divergence = (price_trend > 0) & (volume_trend < 0)

divergence = pd.Series(0, index=df.index)

divergence[bullish_divergence] = 1

divergence[bearish_divergence] = -1

return divergence

2.2 预测模型构建

预测模型是AIStock系统的核心竞争力,它将传统的技术分析与现代AI技术完美融合,为投资决策提供科学依据。

机器学习模型训练过程

class MLModelTrainer:

"""机器学习模型训练器"""

def __init__(self, model_config=None):

self.config = model_config or self._default_config()

self.models = {}

self.feature_selector = SelectKBest(f_regression, k=20)

self.scaler = StandardScaler()

def train_ensemble_model(self, training_data):

"""

集成模型训练

使用多种算法构建预测模型集合

"""

X, y = self._prepare_training_data(training_data)

X_selected = self.feature_selector.fit_transform(X, y)

X_scaled = self.scaler.fit_transform(X_selected)

base_models = {

'random_forest': RandomForestRegressor(

n_estimators=100,

max_depth=10,

random_state=42

),

'gradient_boosting': GradientBoostingRegressor(

n_estimators=100,

learning_rate=0.1,

max_depth=6,

random_state=42

),

'svm': SVR(

kernel='rbf',

C=1.0,

gamma='scale'

),

'neural_network': MLPRegressor(

hidden_layer_sizes=(100, 50),

activation='relu',

solver='adam',

random_state=42

)

}

trained_models = {}

model_scores = {}

for name, model in base_models.items():

tscv = TimeSeriesSplit(n_splits=5)

scores = cross_val_score(

model, X_scaled, y,

cv=tscv,

scoring='neg_mean_squared_error'

)

model.fit(X_scaled, y)

trained_models[name] = model

model_scores[name] = -scores.mean()

logger.info(f"{name} 模型训练完成,MSE: {model_scores[name]:.4f}")

meta_learner = self._train_meta_learner(

trained_models, X_scaled, y

)

self.models = {

'base_models': trained_models,

'meta_learner': meta_learner,

'model_scores': model_scores

}

return self.models

def _prepare_training_data(self, data):

"""训练数据准备"""

features = []

targets = []

for symbol_data in data:

df = symbol_data['data']

feature_columns = [

'price_change', 'price_range', 'upper_shadow', 'lower_shadow',

'MA5_ratio', 'MA10_ratio', 'MA20_ratio',

'MACD', 'MACD_signal', 'MACD_hist',

'RSI', 'KDJ_K', 'KDJ_D', 'KDJ_J',

'volume_ratio', 'OBV'

]

window_size = 30

for i in range(window_size, len(df) - 5):

feature_window = df.iloc[i-window_size:i][feature_columns]

features.append(feature_window.values.flatten())

future_return = (

df['close'].iloc[i+5] / df['close'].iloc[i] - 1

)

targets.append(future_return)

return np.array(features), np.array(targets)

def _train_meta_learner(self, base_models, X, y):

"""元学习器训练 - 学习如何组合基础模型的预测"""

meta_features = []

for name, model in base_models.items():

predictions = model.predict(X)

meta_features.append(predictions)

meta_X = np.column_stack(meta_features)

meta_learner = LinearRegression()

meta_learner.fit(meta_X, y)

weights = meta_learner.coef_

model_names = list(base_models.keys())

logger.info("元学习器权重分配:")

for name, weight in zip(model_names, weights):

logger.info(f" {name}: {weight:.4f}")

return meta_learner

模型评估指标和优化方法

class ModelEvaluator:

"""模型评估器"""

def __init__(self):

self.metrics = {}

def comprehensive_evaluation(self, model, test_data):

"""综合模型评估"""

X_test, y_test = self._prepare_test_data(test_data)

predictions = model.predict(X_test)

regression_metrics = self._calculate_regression_metrics(

y_test, predictions

)

financial_metrics = self._calculate_financial_metrics(

y_test, predictions

)

stability_metrics = self._calculate_stability_metrics(

y_test, predictions

)

interpretability = self._analyze_feature_importance(model, X_test)

evaluation_result = {

'regression_metrics': regression_metrics,

'financial_metrics': financial_metrics,

'stability_metrics': stability_metrics,

'interpretability': interpretability,

'evaluation_date': datetime.now().isoformat()

}

return evaluation_result

def _calculate_financial_metrics(self, y_true, y_pred):

"""金融特定评估指标"""

direction_accuracy = np.mean(

np.sign(y_true) == np.sign(y_pred)

)

excess_return = y_pred - y_true

information_ratio = np.mean(excess_return) / np.std(excess_return)

cumulative_returns = np.cumprod(1 + y_pred)

running_max = np.maximum.accumulate(cumulative_returns)

drawdown = (cumulative_returns - running_max) / running_max

max_drawdown = np.min(drawdown)

sharpe_ratio = np.mean(y_pred) / np.std(y_pred) * np.sqrt(252)

return {

'direction_accuracy': direction_accuracy,

'information_ratio': information_ratio,

'max_drawdown': max_drawdown,

'sharpe_ratio': sharpe_ratio

}

def _analyze_feature_importance(self, model, X_test):

"""特征重要性分析"""

if hasattr(model, 'feature_importances_'):

importance = model.feature_importances_

else:

import shap

explainer = shap.Explainer(model)

shap_values = explainer(X_test[:100])

importance = np.abs(shap_values.values).mean(axis=0)

feature_names = self._get_feature_names()

feature_importance = dict(zip(feature_names, importance))

sorted_features = sorted(

feature_importance.items(),

key=lambda x: x[1],

reverse=True

)[:10]

return {

'top_features': sorted_features,

'feature_distribution': feature_importance

}

第三章:系统部署与运维

3.1 生产环境配置

生产环境的部署是系统能否稳定运行的关键。AIStock系统采用了容器化部署策略,确保环境一致性和可扩展性。

服务器架构设计

# docker-compose.yml - 生产环境配置

version: '3.8'

services:

# Web应用服务

aistock-web:

build:

context: .

dockerfile: Dockerfile.web

ports:

- "8080:8080"

environment:

- FLASK_ENV=production

- DATABASE_URL=sqlite:///data/stock_data.db

- REDIS_URL=redis://redis:6379/0

- LOG_LEVEL=INFO

volumes:

- ./data:/app/data

- ./logs:/app/logs

depends_on:

- redis

- data-collector

restart: unless-stopped

# 数据采集服务

data-collector:

build:

context: .

dockerfile: Dockerfile.collector

environment:

- TUSHARE_TOKEN=${TUSHARE_TOKEN}

- COLLECTION_INTERVAL=300 # 5分钟采集一次

- DATABASE_URL=sqlite:///data/stock_data.db

volumes:

- ./data:/app/data

- ./logs:/app/logs

restart: unless-stopped

# Redis缓存服务

redis:

image: redis:7-alpine

ports:

- "6379:6379"

volumes:

- redis_data:/data

restart: unless-stopped

# Nginx反向代理

nginx:

image: nginx:alpine

ports:

- "80:80"

- "443:443"

volumes:

- ./nginx.conf:/etc/nginx/nginx.conf

- ./ssl:/etc/nginx/ssl

depends_on:

- aistock-web

restart: unless-stopped

volumes:

redis_data:

性能调优配置

import os

from multiprocessing import cpu_count

class ProductionConfig:

"""生产环境配置类"""

DEBUG = False

TESTING = False

SECRET_KEY = os.environ.get('SECRET_KEY')

DATABASE_URL = os.environ.get('DATABASE_URL', 'sqlite:///data/stock_data.db')

DATABASE_POOL_SIZE = 20

DATABASE_POOL_TIMEOUT = 30

DATABASE_POOL_RECYCLE = 3600

REDIS_URL = os.environ.get('REDIS_URL', 'redis://localhost:6379/0')

REDIS_POOL_SIZE = 50

CACHE_TYPE = 'redis'

CACHE_DEFAULT_TIMEOUT = 300

CACHE_KEY_PREFIX = 'aistock:'

LOG_LEVEL = 'INFO'

LOG_FILE = '/app/logs/aistock.log'

LOG_MAX_SIZE = 100 * 1024 * 1024

LOG_BACKUP_COUNT = 10

WORKERS = cpu_count() * 2 + 1

WORKER_CONNECTIONS = 1000

MAX_REQUESTS = 1000

MAX_REQUESTS_JITTER = 100

TIMEOUT = 30

KEEPALIVE = 2

DATA_COLLECTION_INTERVAL = 300

MAX_CONCURRENT_REQUESTS = 10

REQUEST_TIMEOUT = 30

RETRY_ATTEMPTS = 3

RETRY_DELAY = 5

LLM_PROVIDER = os.environ.get('LLM_PROVIDER', 'local')

OPENAI_API_KEY = os.environ.get('OPENAI_API_KEY')

MODEL_CACHE_SIZE = 100

PREDICTION_CACHE_TTL = 1800

部署脚本示例

#!/bin/bash

set -e

PROJECT_NAME="aistock"

DEPLOY_DIR="/opt/aistock"

BACKUP_DIR="/opt/backups/aistock"

LOG_FILE="/var/log/aistock-deploy.log"

log() {

echo "[$(date '+%Y-%m-%d %H:%M:%S')] $1" | tee -a $LOG_FILE

}

error_exit() {

log "ERROR: $1"

exit 1

}

check_dependencies() {

log "检查系统依赖..."

if ! command -v docker &> /dev/null; then

error_exit "Docker未安装"

fi

if ! command -v docker-compose &> /dev/null; then

error_exit "Docker Compose未安装"

fi

log "依赖检查完成"

}

backup_data() {

log "备份现有数据..."

if [ -d "$DEPLOY_DIR/data" ]; then

BACKUP_NAME="aistock-backup-$(date +%Y%m%d-%H%M%S)"

mkdir -p "$BACKUP_DIR"

cp -r "$DEPLOY_DIR/data" "$BACKUP_DIR/$BACKUP_NAME"

log "数据备份完成: $BACKUP_DIR/$BACKUP_NAME"

fi

}

deploy_application() {

log "开始部署应用..."

mkdir -p "$DEPLOY_DIR"

cd "$DEPLOY_DIR"

if [ -d ".git" ]; then

git pull origin main

else

git clone https://github.com/your-repo/aistock.git .

fi

log "构建Docker镜像..."

docker-compose build --no-cache

log "启动服务..."

docker-compose up -d

sleep 30

if curl -f http://localhost:8080/health > /dev/null 2>&1; then

log "应用部署成功"

else

error_exit "应用启动失败"

fi

}

migrate_database() {

log "执行数据库迁移..."

docker-compose exec aistock-web python manage.py db upgrade

log "数据库迁移完成"

}

optimize_performance() {

log "执行性能优化..."

docker-compose exec aistock-web python scripts/warm_cache.py

find /opt/aistock/logs -name "*.log" -mtime +30 -delete

log "性能优化完成"

}

main() {

log "开始AIStock系统部署"

check_dependencies

backup_data

deploy_application

migrate_database

optimize_performance

log "AIStock系统部署完成"

}

main "$@"

3.2 监控与维护

系统监控是保证服务稳定运行的重要保障。AIStock系统实现了全方位的监控体系。

系统监控方案

import psutil

import time

import logging

from datetime import datetime

from dataclasses import dataclass

from typing import Dict, List

import json

@dataclass

class SystemMetrics:

"""系统指标数据类"""

timestamp: str

cpu_percent: float

memory_percent: float

disk_usage: Dict[str, float]

network_io: Dict[str, int]

process_count: int

load_average: List[float]

class SystemMonitor:

"""系统监控器"""

def __init__(self, config=None):

self.config = config or {}

self.logger = logging.getLogger(__name__)

self.alert_thresholds = {

'cpu_percent': 80.0,

'memory_percent': 85.0,

'disk_usage': 90.0,

'load_average': 5.0

}

def collect_metrics(self) -> SystemMetrics:

"""收集系统指标"""

try:

cpu_percent = psutil.cpu_percent(interval=1)

memory = psutil.virtual_memory()

memory_percent = memory.percent

disk_usage = {}

for partition in psutil.disk_partitions():

try:

usage = psutil.disk_usage(partition.mountpoint)

disk_usage[partition.mountpoint] = usage.percent

except PermissionError:

continue

network = psutil.net_io_counters()

network_io = {

'bytes_sent': network.bytes_sent,

'bytes_recv': network.bytes_recv,

'packets_sent': network.packets_sent,

'packets_recv': network.packets_recv

}

process_count = len(psutil.pids())

load_average = list(psutil.getloadavg())

return SystemMetrics(

timestamp=datetime.now().isoformat(),

cpu_percent=cpu_percent,

memory_percent=memory_percent,

disk_usage=disk_usage,

network_io=network_io,

process_count=process_count,

load_average=load_average

)

except Exception as e:

self.logger.error(f"系统指标收集失败: {e}")

raise

def check_alerts(self, metrics: SystemMetrics) -> List[Dict]:

"""检查告警条件"""

alerts = []

if metrics.cpu_percent > self.alert_thresholds['cpu_percent']:

alerts.append({

'type': 'cpu_high',

'level': 'warning',

'message': f'CPU使用率过高: {metrics.cpu_percent:.1f}%',

'value': metrics.cpu_percent,

'threshold': self.alert_thresholds['cpu_percent']

})

if metrics.memory_percent > self.alert_thresholds['memory_percent']:

alerts.append({

'type': 'memory_high',

'level': 'warning',

'message': f'内存使用率过高: {metrics.memory_percent:.1f}%',

'value': metrics.memory_percent,

'threshold': self.alert_thresholds['memory_percent']

})

for mount_point, usage in metrics.disk_usage.items():

if usage > self.alert_thresholds['disk_usage']:

alerts.append({

'type': 'disk_high',

'level': 'critical',

'message': f'磁盘使用率过高 {mount_point}: {usage:.1f}%',

'value': usage,

'threshold': self.alert_thresholds['disk_usage']

})

if metrics.load_average[0] > self.alert_thresholds['load_average']:

alerts.append({

'type': 'load_high',

'level': 'warning',

'message': f'系统负载过高: {metrics.load_average[0]:.2f}',

'value': metrics.load_average[0],

'threshold': self.alert_thresholds['load_average']

})

return alerts

class ApplicationMonitor:

"""应用监控器"""

def __init__(self, app_name="aistock"):

self.app_name = app_name

self.logger = logging.getLogger(__name__)

def check_service_health(self) -> Dict:

"""检查服务健康状态"""

health_status = {

'timestamp': datetime.now().isoformat(),

'services': {}

}

try:

import requests

response = requests.get('http://localhost:8080/health', timeout=5)

health_status['services']['web'] = {

'status': 'healthy' if response.status_code == 200 else 'unhealthy',

'response_time': response.elapsed.total_seconds(),

'status_code': response.status_code

}

except Exception as e:

health_status['services']['web'] = {

'status': 'unhealthy',

'error': str(e)

}

try:

import sqlite3

conn = sqlite3.connect('./data/stock_data.db', timeout=5)

cursor = conn.cursor()

cursor.execute('SELECT 1')

conn.close()

health_status['services']['database'] = {'status': 'healthy'}

except Exception as e:

health_status['services']['database'] = {

'status': 'unhealthy',

'error': str(e)

}

try:

import redis

r = redis.Redis(host='localhost', port=6379, db=0)

r.ping()

health_status['services']['redis'] = {'status': 'healthy'}

except Exception as e:

health_status['services']['redis'] = {

'status': 'unhealthy',

'error': str(e)

}

return health_status

异常处理机制

import traceback

import logging

from datetime import datetime

from enum import Enum

from typing import Optional, Dict, Any

import smtplib

from email.mime.text import MIMEText

from email.mime.multipart import MIMEMultipart

class ErrorLevel(Enum):

"""错误级别枚举"""

INFO = "info"

WARNING = "warning"

ERROR = "error"

CRITICAL = "critical"

class ExceptionHandler:

"""异常处理器"""

def __init__(self, config=None):

self.config = config or {}

self.logger = logging.getLogger(__name__)

self.error_counts = {}

self.notification_config = self.config.get('notifications', {})

def handle_exception(self,

exception: Exception,

context: Dict[str, Any] = None,

level: ErrorLevel = ErrorLevel.ERROR) -> None:

"""统一异常处理"""

error_info = {

'timestamp': datetime.now().isoformat(),

'exception_type': type(exception).__name__,

'exception_message': str(exception),

'traceback': traceback.format_exc(),

'context': context or {},

'level': level.value

}

self._log_error(error_info)

self._update_error_stats(error_info)

if level in [ErrorLevel.ERROR, ErrorLevel.CRITICAL]:

self._send_alert(error_info)

if level == ErrorLevel.CRITICAL:

self._attempt_recovery(error_info)

def _log_error(self, error_info: Dict) -> None:

"""记录错误日志"""

log_message = (

f"[{error_info['level'].upper()}] "

f"{error_info['exception_type']}: "

f"{error_info['exception_message']}"

)

if error_info['level'] == ErrorLevel.CRITICAL.value:

self.logger.critical(log_message)

elif error_info['level'] == ErrorLevel.ERROR.value:

self.logger.error(log_message)

elif error_info['level'] == ErrorLevel.WARNING.value:

self.logger.warning(log_message)

else:

self.logger.info(log_message)

self.logger.debug(f"错误详情: {error_info}")

def _send_alert(self, error_info: Dict) -> None:

"""发送告警通知"""

if not self.notification_config.get('enabled', False):

return

try:

if self.notification_config.get('email'):

self._send_email_alert(error_info)

if self.notification_config.get('dingtalk'):

self._send_dingtalk_alert(error_info)

except Exception as e:

self.logger.error(f"发送告警通知失败: {e}")

def _send_email_alert(self, error_info: Dict) -> None:

"""发送邮件告警"""

email_config = self.notification_config['email']

msg = MIMEMultipart()

msg['From'] = email_config['from']

msg['To'] = ', '.join(email_config['to'])

msg['Subject'] = f"AIStock系统告警 - {error_info['exception_type']}"

body = f"""

系统发生异常,详情如下:

时间: {error_info['timestamp']}

级别: {error_info['level']}

异常类型: {error_info['exception_type']}

异常信息: {error_info['exception_message']}

上下文信息:

{json.dumps(error_info['context'], indent=2, ensure_ascii=False)}

堆栈跟踪:

{error_info['traceback']}

"""

msg.attach(MIMEText(body, 'plain', 'utf-8'))

server = smtplib.SMTP(email_config['smtp_server'], email_config['smtp_port'])

server.starttls()

server.login(email_config['username'], email_config['password'])

server.send_message(msg)

server.quit()

def _attempt_recovery(self, error_info: Dict) -> None:

"""尝试自动恢复"""

exception_type = error_info['exception_type']

recovery_strategies = {

'ConnectionError': self._recover_connection,

'DatabaseError': self._recover_database,

'MemoryError': self._recover_memory,

'TimeoutError': self._recover_timeout

}

recovery_func = recovery_strategies.get(exception_type)

if recovery_func:

try:

recovery_func(error_info)

self.logger.info(f"自动恢复成功: {exception_type}")

except Exception as e:

self.logger.error(f"自动恢复失败: {e}")

def _recover_connection(self, error_info: Dict) -> None:

"""连接错误恢复"""

import subprocess

subprocess.run(['docker-compose', 'restart', 'redis'], check=True)

time.sleep(5)

def _recover_database(self, error_info: Dict) -> None:

"""数据库错误恢复"""

import sqlite3

try:

conn = sqlite3.connect('./data/stock_data.db')

conn.execute('PRAGMA integrity_check')

conn.close()

except Exception:

import shutil

shutil.copy('./backup/stock_data.db', './data/stock_data.db')

def _recover_memory(self, error_info: Dict) -> None:

"""内存错误恢复"""

import gc

gc.collect()

import os

os.system('docker-compose restart aistock-web')

第四章:应用案例与性能分析

4.1 实际业务场景

AIStock系统在实际应用中展现了强大的分析能力和实用价值。以下是几个典型的应用场景和效果分析。

典型应用案例

案例一:科技股趋势分析

def analyze_tech_stock_case():

"""科技股分析案例"""

tech_stocks = ['000001.SZ', '000002.SZ', '600036.SH', '600519.SH']

analysis_results = {}

for symbol in tech_stocks:

stock_data = data_engine.get_stock_data(symbol, days=30)

result = prediction_engine.comprehensive_analysis(symbol)

technical_signals = {

'MACD_signal': 'BUY' if result['technical_analysis']['MACD_bullish'] else 'SELL',

'RSI_signal': 'OVERSOLD' if result['technical_analysis']['RSI'] < 30 else

'OVERBOUGHT' if result['technical_analysis']['RSI'] > 70 else 'NEUTRAL',

'KDJ_signal': 'GOLDEN_CROSS' if result['technical_analysis']['KDJ_golden_cross'] else 'NORMAL'

}

ai_insights = result['ai_analysis']

analysis_results[symbol] = {

'prediction': result['prediction'],

'confidence': result['confidence'],

'technical_signals': technical_signals,

'ai_insights': ai_insights,

'risk_assessment': calculate_risk_metrics(stock_data)

}

return analysis_results

def calculate_risk_metrics(stock_data):

"""计算风险指标"""

returns = stock_data['close'].pct_change().dropna()

var_95 = np.percentile(returns, 5)

var_99 = np.percentile(returns, 1)

cumulative = (1 + returns).cumprod()

running_max = cumulative.expanding().max()

drawdown = (cumulative - running_max) / running_max

max_drawdown = drawdown.min()

volatility = returns.std() * np.sqrt(252)

return {

'var_95': var_95,

'var_99': var_99,

'max_drawdown': max_drawdown,

'volatility': volatility,

'sharpe_ratio': returns.mean() / returns.std() * np.sqrt(252)

}

效果对比数据

通过对比传统分析方法和AIStock系统的预测效果,我们得到了以下数据:

4.2 性能优化经验

在系统运行过程中,我们积累了丰富的性能优化经验,这些经验对于类似系统的开发具有重要参考价值。

系统调优实践

class PerformanceOptimizer:

"""性能优化器"""

def __init__(self):

self.cache_manager = CacheManager()

self.db_optimizer = DatabaseOptimizer()

self.memory_manager = MemoryManager()

def optimize_data_processing(self):

"""数据处理性能优化"""

def batch_process_stocks(symbols, batch_size=50):

"""批量处理股票数据"""

results = []

for i in range(0, len(symbols), batch_size):

batch = symbols[i:i + batch_size]

with ThreadPoolExecutor(max_workers=10) as executor:

futures = [

executor.submit(self.process_single_stock, symbol)

for symbol in batch

]

batch_results = [

future.result() for future in futures

]

results.extend(batch_results)

time.sleep(0.1)

return results

def optimize_dataframe_operations(df):

"""DataFrame操作优化"""

df['price_change'] = df['close'].pct_change()

df['volatility'] = df['price_change'].rolling(20).std()

@numba.jit(nopython=True)

def fast_rsi_calculation(prices, window=14):

"""快速RSI计算"""

deltas = np.diff(prices)

gains = np.where(deltas > 0, deltas, 0)

losses = np.where(deltas < 0, -deltas, 0)

avg_gains = np.convolve(gains, np.ones(window)/window, mode='valid')

avg_losses = np.convolve(losses, np.ones(window)/window, mode='valid')

rs = avg_gains / avg_losses

rsi = 100 - (100 / (1 + rs))

return rsi

df['RSI_fast'] = fast_rsi_calculation(df['close'].values)

return df

def optimize_memory_usage(df):

"""内存使用优化"""

for col in df.select_dtypes(include=['float64']).columns:

df[col] = pd.to_numeric(df[col], downcast='float')

for col in df.select_dtypes(include=['int64']).columns:

df[col] = pd.to_numeric(df[col], downcast='integer')

unnecessary_cols = [col for col in df.columns if col.startswith('temp_')]

df = df.drop(columns=unnecessary_cols)

return df

def optimize_database_queries(self):

"""数据库查询优化"""

def create_optimized_indexes():

"""创建优化索引"""

indexes = [

"CREATE INDEX IF NOT EXISTS idx_symbol_date ON stock_data(symbol, date)",

"CREATE INDEX IF NOT EXISTS idx_date ON stock_data(date)",

"CREATE INDEX IF NOT EXISTS idx_symbol ON stock_data(symbol)",

"CREATE INDEX IF NOT EXISTS idx_volume ON stock_data(volume)"

]

conn = sqlite3.connect('./data/stock_data.db')

for index_sql in indexes:

conn.execute(index_sql)

conn.commit()

conn.close()

def optimized_query_builder(symbol, start_date, end_date):

"""优化的查询构建器"""

query = """

SELECT symbol, date, open, close, high, low, volume

FROM stock_data

WHERE symbol = ? AND date BETWEEN ? AND ?

ORDER BY date ASC

"""

return query, (symbol, start_date, end_date)

def setup_connection_pool():

"""设置数据库连接池"""

from sqlalchemy import create_engine

from sqlalchemy.pool import StaticPool

engine = create_engine(

'sqlite:///data/stock_data.db',

poolclass=StaticPool,

pool_size=20,

max_overflow=30,

pool_timeout=30,

pool_recycle=3600,

echo=False

)

return engine

**测试环境配置:**

- CPU: Intel i7-10700K (8核16线程)

- 内存: 32GB DDR4-3200

- 存储: 1TB NVMe SSD

- 操作系统: Ubuntu 20.04 LTS

**性能基准测试结果:**

```python

def performance_benchmark():

"""性能基准测试"""

test_results = {}

start_time = time.time()

symbols = get_all_stock_symbols()[:1000]

processed_data = batch_process_stocks(symbols)

processing_time = time.time() - start_time

test_results['data_processing'] = {

'stocks_count': 1000,

'days_per_stock': 30,

'total_time': processing_time,

'stocks_per_second': 1000 / processing_time

}

start_time = time.time()

predictions = []

for symbol in symbols[:100]:

prediction = prediction_engine.comprehensive_analysis(symbol)

predictions.append(prediction)

prediction_time = time.time() - start_time

test_results['prediction_performance'] = {

'predictions_count': 100,

'total_time': prediction_time,

'predictions_per_second': 100 / prediction_time,

'avg_time_per_prediction': prediction_time / 100

}

start_time = time.time()

for _ in range(1000):

query_stock_data('000001.SZ', '2024-01-01', '2024-01-31')

query_time = time.time() - start_time

test_results['database_performance'] = {

'queries_count': 1000,

'total_time': query_time,

'queries_per_second': 1000 / query_time

}

return test_results

benchmark_results = {

'data_processing': {

'stocks_count': 1000,

'days_per_stock': 30,

'total_time': 45.2,

'stocks_per_second': 22.1

},

'prediction_performance': {

'predictions_count': 100,

'total_time': 12.8,

'predictions_per_second': 7.8,

'avg_time_per_prediction': 0.128

},

'database_performance': {

'queries_count': 1000,

'total_time': 2.3,

'queries_per_second': 434.8

}

}

内存使用优化效果:

第五章:开发指南与最佳实践

5.1 API使用说明

AIStock系统提供了完整的RESTful API接口,方便开发者进行二次开发和系统集成。

核心API接口详解

from flask import Flask, request, jsonify

from flask_restful import Api, Resource

from datetime import datetime, timedelta

import json

app = Flask(__name__)

api = Api(app)

class StockDataAPI(Resource):

"""股票数据API"""

def get(self, symbol):

"""获取股票数据

参数:

symbol: 股票代码 (如: 000001.SZ)

start_date: 开始日期 (可选, 格式: YYYY-MM-DD)

end_date: 结束日期 (可选, 格式: YYYY-MM-DD)

fields: 返回字段 (可选, 逗号分隔)

返回:

JSON格式的股票数据

"""

try:

start_date = request.args.get('start_date')

end_date = request.args.get('end_date')

fields = request.args.get('fields', 'all')

if not end_date:

end_date = datetime.now().strftime('%Y-%m-%d')

if not start_date:

start_date = (datetime.now() - timedelta(days=30)).strftime('%Y-%m-%d')

stock_data = data_engine.get_stock_data(symbol, start_date, end_date)

if fields != 'all':

field_list = fields.split(',')

stock_data = stock_data[field_list]

result = {

'symbol': symbol,

'start_date': start_date,

'end_date': end_date,

'data_count': len(stock_data),

'data': stock_data.to_dict('records')

}

return jsonify({

'status': 'success',

'result': result,

'timestamp': datetime.now().isoformat()

})

except Exception as e:

return jsonify({

'status': 'error',

'message': str(e),

'timestamp': datetime.now().isoformat()

}), 400

class PredictionAPI(Resource):

"""预测分析API"""

def post(self):

"""股票预测分析

请求体:

{

"symbol": "000001.SZ",

"analysis_type": "comprehensive",

"days": 30,

"include_technical": true,

"include_ai": true

}

返回:

预测分析结果

"""

try:

data = request.get_json()

required_fields = ['symbol']

for field in required_fields:

if field not in data:

return jsonify({

'status': 'error',

'message': f'缺少必要参数: {field}'

}), 400

symbol = data['symbol']

analysis_type = data.get('analysis_type', 'comprehensive')

days = data.get('days', 30)

include_technical = data.get('include_technical', True)

include_ai = data.get('include_ai', True)

if analysis_type == 'comprehensive':

result = prediction_engine.comprehensive_analysis(

symbol, days=days

)

elif analysis_type == 'technical':

result = prediction_engine.technical_analysis_only(

symbol, days=days

)

elif analysis_type == 'ai':

result = prediction_engine.ai_analysis_only(

symbol, days=days

)

else:

return jsonify({

'status': 'error',

'message': f'不支持的分析类型: {analysis_type}'

}), 400

return jsonify({

'status': 'success',

'result': result,

'timestamp': datetime.now().isoformat()

})

except Exception as e:

return jsonify({

'status': 'error',

'message': str(e),

'timestamp': datetime.now().isoformat()

}), 500

api.add_resource(StockDataAPI, '/api/stock/<string:symbol>')

api.add_resource(PredictionAPI, '/api/prediction')

def api_usage_examples():

"""API使用示例代码"""

import requests

import json

base_url = "http://localhost:8080"

def get_stock_data_example():

"""获取股票数据示例"""

url = f"{base_url}/api/stock/000001.SZ"

params = {

'start_date': '2024-01-01',

'end_date': '2024-01-31',

'fields': 'date,open,close,high,low,volume'

}

response = requests.get(url, params=params)

if response.status_code == 200:

data = response.json()

print("股票数据获取成功:")

print(f"数据条数: {data['result']['data_count']}")

print(f"最新价格: {data['result']['data'][-1]['close']}")

else:

print(f"请求失败: {response.status_code}")

print(response.text)

def prediction_analysis_example():

"""预测分析示例"""

url = f"{base_url}/api/prediction"

payload = {

"symbol": "000001.SZ",

"analysis_type": "comprehensive",

"days": 30,

"include_technical": True,

"include_ai": True

}

headers = {'Content-Type': 'application/json'}

response = requests.post(url, data=json.dumps(payload), headers=headers)

if response.status_code == 200:

result = response.json()

prediction = result['result']

print("预测分析结果:")

print(f"预测结论: {prediction['prediction']}")

print(f"置信度: {prediction['confidence']:.2%}")

print(f"技术分析: {prediction['technical_analysis']}")

print(f"AI分析: {prediction['ai_analysis']}")

else:

print(f"预测请求失败: {response.status_code}")

print(response.text)

def batch_analysis_example():

"""批量分析示例"""

symbols = ['000001.SZ', '000002.SZ', '600036.SH']

results = {}

for symbol in symbols:

payload = {

"symbol": symbol,

"analysis_type": "comprehensive",

"days": 30

}

response = requests.post(

f"{base_url}/api/prediction",

json=payload

)

if response.status_code == 200:

results[symbol] = response.json()['result']

else:

print(f"分析失败: {symbol}")

print("批量分析结果汇总:")

for symbol, result in results.items():

print(f"{symbol}: {result['prediction']} (置信度: {result['confidence']:.2%})")

return {

'get_stock_data': get_stock_data_example,

'prediction_analysis': prediction_analysis_example,

'batch_analysis': batch_analysis_example

}

5.2 二次开发建议

为了帮助开发者更好地基于AIStock系统进行二次开发,我们提供了详细的开发指南和最佳实践。

扩展开发指导

class CustomAnalyzer:

"""自定义分析器基类"""

def __init__(self, config=None):

self.config = config or {}

self.name = self.__class__.__name__

def analyze(self, stock_data, **kwargs):

"""分析方法 - 子类必须实现"""

raise NotImplementedError("子类必须实现analyze方法")

def validate_data(self, stock_data):

"""数据验证"""

required_columns = ['open', 'close', 'high', 'low', 'volume']

missing_columns = [col for col in required_columns if col not in stock_data.columns]

if missing_columns:

raise ValueError(f"数据缺少必要字段: {missing_columns}")

if len(stock_data) < 20:

raise ValueError("数据量不足,至少需要20个交易日的数据")

return True

class SentimentAnalyzer(CustomAnalyzer):

"""情感分析器 - 基于新闻和社交媒体数据"""

def __init__(self, config=None):

super().__init__(config)

self.news_sources = config.get('news_sources', [])

self.sentiment_model = self._load_sentiment_model()

def analyze(self, stock_data, symbol=None, **kwargs):

"""情感分析主方法"""

news_data = self._fetch_news_data(symbol)

sentiment_scores = self._analyze_sentiment(news_data)

social_sentiment = self._analyze_social_sentiment(symbol)

overall_sentiment = self._calculate_overall_sentiment(

sentiment_scores, social_sentiment

)

return {

'sentiment_score': overall_sentiment,

'news_sentiment': sentiment_scores,

'social_sentiment': social_sentiment,

'sentiment_trend': self._calculate_sentiment_trend(sentiment_scores),

'confidence': self._calculate_confidence(sentiment_scores)

}

def _fetch_news_data(self, symbol):

"""获取新闻数据"""

pass

def _analyze_sentiment(self, news_data):

"""新闻情感分析"""

pass

def _load_sentiment_model(self):

"""加载情感分析模型"""

pass

class VolatilityAnalyzer(CustomAnalyzer):

"""波动率分析器 - 高级波动率建模"""

def analyze(self, stock_data, **kwargs):

"""波动率分析"""

historical_vol = self._calculate_historical_volatility(stock_data)

garch_vol = self._garch_volatility_forecast(stock_data)

implied_vol = self._calculate_implied_volatility(stock_data)

vol_regime = self._volatility_regime_analysis(stock_data)

return {

'historical_volatility': historical_vol,

'garch_forecast': garch_vol,

'implied_volatility': implied_vol,

'volatility_regime': vol_regime,

'volatility_percentile': self._calculate_vol_percentile(historical_vol)

}

def _garch_volatility_forecast(self, stock_data):

"""GARCH模型波动率预测"""

from arch import arch_model

returns = stock_data['close'].pct_change().dropna() * 100

model = arch_model(returns, vol='Garch', p=1, q=1)

fitted_model = model.fit(disp='off')

forecast = fitted_model.forecast(horizon=5)

return {

'model_params': fitted_model.params.to_dict(),

'forecast_variance': forecast.variance.iloc[-1].tolist(),

'forecast_volatility': np.sqrt(forecast.variance.iloc[-1]).tolist()

}

class PluginManager:

"""插件管理器"""

def __init__(self):

self.analyzers = {}

self.hooks = {}

def register_analyzer(self, name, analyzer_class):

"""注册分析器"""

if not issubclass(analyzer_class, CustomAnalyzer):

raise ValueError("分析器必须继承CustomAnalyzer类")

self.analyzers[name] = analyzer_class

print(f"分析器 {name} 注册成功")

def get_analyzer(self, name, config=None):

"""获取分析器实例"""

if name not in self.analyzers:

raise ValueError(f"未找到分析器: {name}")

return self.analyzers[name](config)

def register_hook(self, event, callback):

"""注册事件钩子"""

if event not in self.hooks:

self.hooks[event] = []

self.hooks[event].append(callback)

def trigger_hook(self, event, *args, **kwargs):

"""触发事件钩子"""

if event in self.hooks:

for callback in self.hooks[event]:

try:

callback(*args, **kwargs)

except Exception as e:

print(f"钩子执行失败: {e}")

plugin_manager = PluginManager()

plugin_manager.register_analyzer('sentiment', SentimentAnalyzer)

plugin_manager.register_analyzer('volatility', VolatilityAnalyzer)

sentiment_analyzer = plugin_manager.get_analyzer('sentiment', {

'news_sources': ['sina', 'eastmoney', 'cnstock']

})

volatility_analyzer = plugin_manager.get_analyzer('volatility')

开发注意事项

1. 数据一致性保证

def ensure_data_consistency():

"""确保数据一致性的最佳实践"""

validation_rules = {

'price_validation': lambda df: (df['high'] >= df['low']).all(),

'volume_validation': lambda df: (df['volume'] >= 0).all(),

'date_validation': lambda df: df['date'].is_monotonic_increasing,

'completeness_validation': lambda df: df.isnull().sum().sum() == 0

}

def repair_data_inconsistencies(df):

"""修复数据不一致问题"""

invalid_price_mask = df['high'] < df['low']

if invalid_price_mask.any():

df.loc[invalid_price_mask, ['high', 'low']] = df.loc[invalid_price_mask, ['low', 'high']].values

df.loc[df['volume'] < 0, 'volume'] = 0

df = df.fillna(method='ffill').fillna(method='bfill')

return df

return validation_rules, repair_data_inconsistencies

2. 性能优化建议

class PerformanceBestPractices:

"""性能优化最佳实践"""

@staticmethod

def optimize_pandas_operations():

"""Pandas操作优化"""

def vectorized_calculation(df):

df['returns'] = df['close'].pct_change()

df['ma20'] = df['close'].rolling(20).mean()

def optimize_memory_usage(df):

for col in df.select_dtypes(include=['float64']):

df[col] = pd.to_numeric(df[col], downcast='float')

chunk_size = 10000

for chunk in pd.read_csv('large_file.csv', chunksize=chunk_size):

process_chunk(chunk)

from functools import lru_cache

@lru_cache(maxsize=128)

def cached_calculation(symbol, start_date, end_date):

return expensive_calculation(symbol, start_date, end_date)

@staticmethod

def database_optimization():

"""数据库优化建议"""

def batch_insert(data_list):

conn = sqlite3.connect('stock_data.db')

conn.executemany(

"INSERT INTO stock_data VALUES (?, ?, ?, ?, ?, ?)",

data_list

)

conn.commit()

conn.close()

def transactional_operation():

conn = sqlite3.connect('stock_data.db')

try:

conn.execute("BEGIN TRANSACTION")

conn.execute("INSERT INTO ...")

conn.execute("UPDATE ...")

conn.execute("COMMIT")

except Exception as e:

conn.execute("ROLLBACK")

raise e

finally:

conn.close()

第六章:系统安全与合规

6.1 数据安全保护

在金融数据处理系统中,数据安全是至关重要的。AIStock系统实现了多层次的安全防护机制。

import hashlib

import hmac

import secrets

from cryptography.fernet import Fernet

from cryptography.hazmat.primitives import hashes

from cryptography.hazmat.primitives.kdf.pbkdf2 import PBKDF2HMAC

import base64

class DataEncryption:

"""数据加密管理器"""

def __init__(self, master_key=None):

self.master_key = master_key or self._generate_master_key()

self.cipher_suite = self._create_cipher_suite()

def _generate_master_key(self):

"""生成主密钥"""

return Fernet.generate_key()

def _create_cipher_suite(self):

"""创建加密套件"""

return Fernet(self.master_key)

def encrypt_sensitive_data(self, data):

"""加密敏感数据"""

if isinstance(data, str):

data = data.encode('utf-8')

encrypted_data = self.cipher_suite.encrypt(data)

return base64.b64encode(encrypted_data).decode('utf-8')

def decrypt_sensitive_data(self, encrypted_data):

"""解密敏感数据"""

encrypted_data = base64.b64decode(encrypted_data.encode('utf-8'))

decrypted_data = self.cipher_suite.decrypt(encrypted_data)

return decrypted_data.decode('utf-8')

class AccessControl:

"""访问控制管理器"""

def __init__(self):

self.api_keys = {}

self.rate_limits = {}

self.access_logs = []

def generate_api_key(self, user_id, permissions=None):

"""生成API密钥"""

api_key = secrets.token_urlsafe(32)

self.api_keys[api_key] = {

'user_id': user_id,

'permissions': permissions or ['read'],

'created_at': datetime.now(),

'last_used': None,

'usage_count': 0

}

return api_key

def validate_api_key(self, api_key):

"""验证API密钥"""

if api_key not in self.api_keys:

return False, "无效的API密钥"

key_info = self.api_keys[api_key]

key_info['last_used'] = datetime.now()

key_info['usage_count'] += 1

return True, key_info

def check_rate_limit(self, api_key, endpoint):

"""检查访问频率限制"""

rate_key = f"{api_key}:{endpoint}"

current_time = time.time()

if rate_key not in self.rate_limits:

self.rate_limits[rate_key] = []

self.rate_limits[rate_key] = [

timestamp for timestamp in self.rate_limits[rate_key]

if current_time - timestamp < 3600

]

if len(self.rate_limits[rate_key]) >= 1000:

return False, "访问频率超限"

self.rate_limits[rate_key].append(current_time)

return True, "访问允许"

6.2 合规性要求

金融数据系统需要遵守相关的法律法规和行业标准。

class ComplianceManager:

"""合规管理器"""

def __init__(self):

self.audit_logs = []

self.compliance_rules = self._load_compliance_rules()

def _load_compliance_rules(self):

"""加载合规规则"""

return {

'data_retention': {

'max_days': 2555,

'backup_required': True

},

'access_logging': {

'log_all_access': True,

'log_retention_days': 365

},

'data_privacy': {

'anonymize_user_data': True,

'encrypt_sensitive_fields': True

}

}

def log_data_access(self, user_id, data_type, action, details=None):

"""记录数据访问日志"""

log_entry = {

'timestamp': datetime.now().isoformat(),

'user_id': user_id,

'data_type': data_type,

'action': action,

'details': details or {},

'ip_address': self._get_client_ip(),

'user_agent': self._get_user_agent()

}

self.audit_logs.append(log_entry)

self._persist_audit_log(log_entry)

def check_data_retention_compliance(self):

"""检查数据保留合规性"""

max_retention_days = self.compliance_rules['data_retention']['max_days']

cutoff_date = datetime.now() - timedelta(days=max_retention_days)

expired_data = self._find_expired_data(cutoff_date)

if expired_data:

self._archive_expired_data(expired_data)

return len(expired_data)

第七章:未来发展方向

7.1 技术演进路线

AIStock系统的技术演进将围绕以下几个核心方向展开:

1. 深度学习模型升级

class NextGenPredictionEngine:

"""下一代预测引擎"""

def __init__(self):

self.transformer_model = self._load_transformer_model()

self.graph_neural_network = self._load_gnn_model()

self.reinforcement_learning_agent = self._load_rl_agent()

def _load_transformer_model(self):

"""加载Transformer模型用于时间序列预测"""

pass

def _load_gnn_model(self):

"""加载图神经网络模型"""

pass

def _load_rl_agent(self):

"""加载强化学习智能体"""

pass

2. 实时流处理架构

class RealTimeStreamProcessor:

"""实时流处理器"""

def __init__(self):

self.kafka_consumer = self._setup_kafka_consumer()

self.redis_stream = self._setup_redis_stream()

self.websocket_manager = self._setup_websocket()

def process_real_time_data(self):

"""处理实时数据流"""

pass

7.2 功能扩展计划

多资产类别支持

- 扩展到期货、期权、债券等金融工具

- 支持加密货币市场分析

- 国际市场数据集成

智能投顾功能

- 个性化投资建议生成

- 风险偏好评估和匹配

- 投资组合优化算法

社交化交易功能

- 投资者情绪分析

- 社交媒体数据挖掘

- 跟单交易系统

结语

总结

通过对AIStock系统的深度技术解析,我们可以看到,这个开源项目不仅仅是一个简单的股票分析工具,更是现代金融科技发展的一个缩影。它成功地将传统的技术分析方法与前沿的人工智能技术相结合,为个人投资者提供了专业级的分析能力。

系统核心价值回顾:

- 技术创新性:系统采用了模块化架构设计,集成了多种数据源、多种分析算法和多种AI模型,展现了现代软件工程的最佳实践。

- 实用性:通过实际的性能测试数据,我们看到系统在预测准确率、风险控制等关键指标上都有显著提升,具有很强的实用价值。

- 可扩展性:完善的插件系统和API接口设计,为二次开发提供了良好的基础,开发者可以根据自己的需求进行定制化开发。

- 开源精神:作为开源项目,AIStock为整个金融科技社区贡献了宝贵的技术资源和实践经验。

思考

尽管AIStock系统展现了强大的技术能力,但我们也必须认识到当前技术的局限性:

数据质量依赖:系统的预测能力很大程度上依赖于数据的质量和完整性。在数据源出现问题时,预测准确性会受到影响。

市场环境适应性:金融市场具有高度的复杂性和不确定性,任何预测模型都无法保证100%的准确性,特别是在极端市场条件下。

监管合规挑战:随着金融监管的不断加强,系统需要持续更新以满足合规要求,这对技术团队提出了更高的要求。

技术门槛:虽然系统提供了友好的界面,但要充分发挥其潜力,用户仍需要具备一定的金融知识和技术背景。

展望未来

展望未来,我们可以预见AIStock系统将在以下几个方向继续演进:

技术层面:

- 更先进的深度学习模型将被引入,提高预测的准确性和稳定性

- 实时流处理能力将得到增强,支持更高频的交易场景

- 多模态数据融合将成为趋势,整合文本、图像、音频等多种数据源

应用层面:

- 从单一的股票分析扩展到全资产类别的投资分析

- 从个人投资者工具发展为机构级的投资决策支持系统

- 从被动的分析工具演进为主动的智能投顾服务

生态层面:

- 更多的开发者将参与到项目中来,形成活跃的开源社区

- 与更多的金融机构和数据提供商建立合作关系

- 推动整个行业向更加开放、透明的方向发展

社会影响:

- 降低专业投资分析的门槛,让更多普通投资者受益

- 促进金融市场的信息透明度和效率提升

- 推动金融科技的普及和发展

最后,我想说的是,AIStock系统的成功不仅在于其技术的先进性,更在于其体现的开放、共享、创新的精神。在这个快速变化的时代,只有保持开放的心态,拥抱新技术,才能在激烈的竞争中立于不败之地。

对于每一位金融科技的从业者和爱好者,我们都应该从AIStock系统中汲取经验和灵感,不断学习、不断创新,为构建更加智能、高效、公平的金融生态系统贡献自己的力量。

技术的发展永无止境,金融市场的变化也永不停歇。但正是这种不确定性,给了我们无限的创新空间和发展机遇。让我们携手前行,在AI与金融融合的道路上,创造更加美好的未来。

本文基于AIStock开源项目的技术分析,旨在为金融科技从业者提供技术参考和实践指导。文中涉及的代码示例和技术方案仅供学习交流使用,实际应用时请根据具体需求进行调整和优化。

作者简介:资深 AI 开发工程师,专注于 AI 应用层开发,拥有多年的 AI 开发和 Java 开发经验。